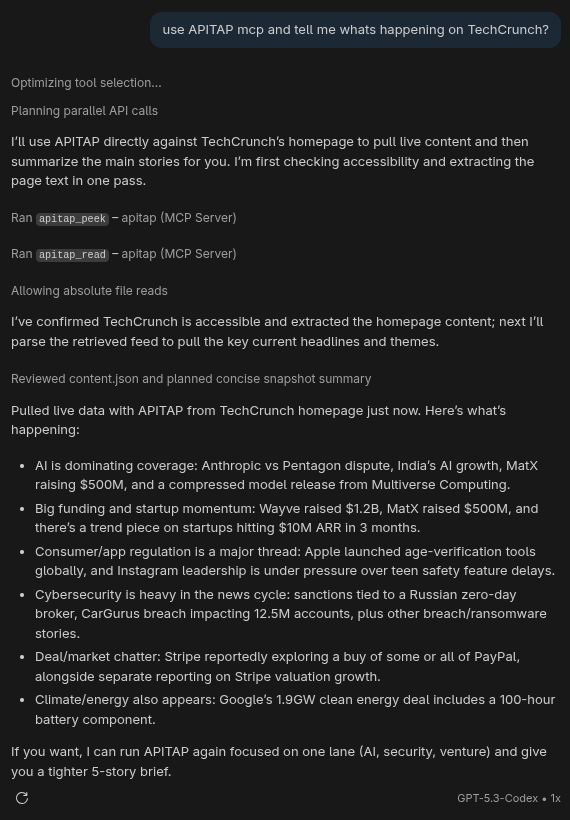

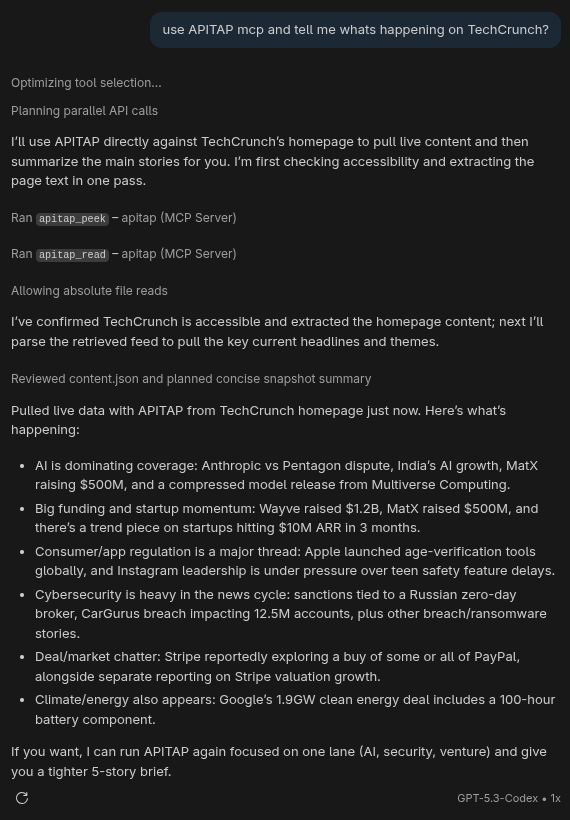

Real numbers. Real sites.

Demo: TechCrunch in One Command

Run apitap read https://news.ycombinator.com/ yourself and count the tokens.

Every website has an API powering it. ApiTap finds it, captures it, and lets your AI agent call it directly — no browser, no scraping, no DOM. Just structured JSON at a fraction of the token cost.

$ npm install -g @apitap/core

$ claude mcp add -s user apitap -- apitap-mcp

That's not an optimization. That's a different architecture.

Point ApiTap at any site. It opens a browser, watches network traffic, and identifies real API endpoints — filtering out analytics, tracking pixels, and framework noise.
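As a rough illustration of the filtering step, the sketch below separates likely API calls from noise with a simple heuristic. The `CapturedRequest` type, the host blocklist, and the JSON-only rule are assumptions made for this example, not ApiTap's actual logic.

```typescript
// Hypothetical shape of one captured network request (not ApiTap's real type).
interface CapturedRequest {
  url: string;
  method: string;
  contentType: string; // value of the response Content-Type header
}

// Illustrative blocklist of analytics/tracking hosts; a real list would be much longer.
const NOISE_HOSTS = ["google-analytics.com", "doubleclick.net", "googletagmanager.com"];

// Keep requests that return JSON and do not come from a known noise host.
function isLikelyApiCall(req: CapturedRequest): boolean {
  const host = new URL(req.url).hostname;
  const isNoise = NOISE_HOSTS.some((h) => host === h || host.endsWith("." + h));
  const isJson = req.contentType.toLowerCase().includes("json");
  return !isNoise && isJson;
}

const captured: CapturedRequest[] = [
  { url: "https://example.com/api/v1/posts?page=2", method: "GET", contentType: "application/json" },
  { url: "https://www.google-analytics.com/g/collect", method: "POST", contentType: "image/gif" },
  { url: "https://example.com/static/app.js", method: "GET", contentType: "text/javascript" },
];

const endpoints = captured.filter(isLikelyApiCall);
console.log(endpoints.map((r) => r.url));
```

Only the first request survives here: the analytics beacon is dropped by host, and the script bundle is dropped because it is not JSON.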

→ A skill file: a portable JSON map of the site's API. Parameterized URLs, auth tokens encrypted at rest, HMAC-signed so tampering is detected. Share it, version it, commit it.
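To make the HMAC idea concrete, here is a minimal sketch of signing and verifying a JSON payload with Node's built-in crypto module. The `SkillFile` fields, the key handling, and the hex signature format are assumptions for illustration; ApiTap's real file format and key management may differ.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Hypothetical skill-file payload; the real ApiTap schema may differ.
interface SkillFile {
  domain: string;
  endpoints: { method: string; urlTemplate: string }[];
}

// Sign the serialized payload with HMAC-SHA256 under a secret key.
function sign(payload: string, key: string): string {
  return createHmac("sha256", key).update(payload).digest("hex");
}

// Recompute the signature and compare it in constant time.
function verify(payload: string, signature: string, key: string): boolean {
  const expected = Buffer.from(sign(payload, key), "hex");
  const actual = Buffer.from(signature, "hex");
  return expected.length === actual.length && timingSafeEqual(expected, actual);
}

const skill: SkillFile = {
  domain: "example.com",
  endpoints: [{ method: "GET", urlTemplate: "https://example.com/api/items/{id}" }],
};
const key = "demo-secret"; // placeholder; a real key would come from secure storage
const payload = JSON.stringify(skill);
const signature = sign(payload, key);

console.log(verify(payload, signature, key)); // true
console.log(verify(payload.replace("items", "admin"), signature, key)); // false
```

Any edit to the payload changes the expected signature, so a modified file fails verification unless the editor also holds the key.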

→ Your agent reads the skill file and calls the API directly with fetch(). No Chrome. No DOM. No flaky selectors. Just structured data.
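The replay step might look something like the sketch below: fill in a parameterized URL, then call it with fetch(). The `Endpoint` type, the `{name}` placeholder syntax, and both function names are hypothetical, chosen only to illustrate the idea.

```typescript
// Hypothetical endpoint entry from a skill file; field names are illustrative.
interface Endpoint {
  method: string;
  urlTemplate: string; // e.g. "https://example.com/api/items/{id}"
}

// Fill {name} placeholders with URL-encoded parameter values.
function buildUrl(template: string, params: Record<string, string>): string {
  return template.replace(/\{(\w+)\}/g, (_match: string, name: string) => {
    const value = params[name];
    if (value === undefined) throw new Error(`missing parameter: ${name}`);
    return encodeURIComponent(value);
  });
}

// Call the endpoint directly and return parsed JSON; no browser involved.
async function callEndpoint(ep: Endpoint, params: Record<string, string>): Promise<unknown> {
  const res = await fetch(buildUrl(ep.urlTemplate, params), { method: ep.method });
  if (!res.ok) throw new Error(`HTTP ${res.status}`);
  return res.json();
}

const ep: Endpoint = { method: "GET", urlTemplate: "https://example.com/api/items/{id}?q={query}" };
console.log(buildUrl(ep.urlTemplate, { id: "42", query: "api tap" }));
// https://example.com/api/items/42?q=api%20tap
```

An agent would then await `callEndpoint(ep, { id: "42", query: "api tap" })` and work with the returned JSON instead of parsing rendered HTML.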

Optional Chrome extension. Install it and it silently builds a map of every API you visit — no infobar, no performance hit. Banking, login pages, and payment flows are blocked at collection time — never observed, never stored. For everything else, the index keeps endpoint shapes only: never header values, never query param values, never auth tokens.
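One way to picture "endpoint shapes only" is a function that reduces a request to its structure and discards every value. The `EndpointShape` fields and the numeric-ID rule below are assumptions for this sketch, not the extension's real index schema.

```typescript
// Hypothetical index entry: structure only, no values. Not ApiTap's real schema.
interface EndpointShape {
  method: string;
  host: string;
  path: string;
  queryKeys: string[];
  headerNames: string[];
}

// Reduce a request to its shape, discarding query values and header values.
function toShape(method: string, rawUrl: string, headers: Record<string, string>): EndpointShape {
  const url = new URL(rawUrl);
  const queryKeys: string[] = [];
  url.searchParams.forEach((_value, name) => {
    if (queryKeys.indexOf(name) === -1) queryKeys.push(name);
  });
  return {
    method,
    host: url.hostname,
    // Replace purely numeric path segments with a placeholder so IDs are not stored.
    path: url.pathname.replace(/\/\d+(?=\/|$)/g, "/{id}"),
    queryKeys: queryKeys.sort(),
    headerNames: Object.keys(headers).map((h) => h.toLowerCase()).sort(),
  };
}

const shape = toShape(
  "GET",
  "https://example.com/api/users/1234/posts?token=s3cret&page=2",
  { Authorization: "Bearer abc123", Accept: "application/json" },
);
console.log(JSON.stringify(shape));
```

The resulting entry records that a `token` parameter and an `authorization` header exist, but neither `s3cret` nor `abc123` appears anywhere in it.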

As you use Discord, Spotify, or Notion, the extension silently records endpoint shapes, auth types, and pagination patterns. No capture step. No button to click.

Agents query the index before capturing. The apitap_discover MCP tool answers "what do you know about X?" instantly, with zero runtime overhead.

When a full skill file is needed, the extension briefly attaches, captures response shapes, then detaches. You approve once. It never runs unattended by default.

Already have Chrome open with signed-in sessions? Enable remote debugging in one click, then apitap attach passively captures across all tabs — OAuth redirects, SSO flows, multi-domain auth. No extension required.

1427 tests. Defense in depth. Because your agent shouldn't be a liability.

The web was built for human eyes; ApiTap makes it native to machines.